Creating a neural network from scratch can take days, if not weeks. To ease this process, developers who are short on time and resources use neural network frameworks. Many such frameworks exist, but the most popular ones currently are PyTorch and TensorFlow.

Both are excellent frameworks that simplify the implementation of large-scale deep neural network models; nonetheless, one still has to decide which to use. The decision is not easy, as each framework has its perks.

In this article, we will compare these two frameworks, TensorFlow and PyTorch, based on the factors below:

- Popularity

- Documentation and Sources

- Learning Curve

- Graph Construction

- Debugging

- Visualization

- Serialization

- Deployment

Popularity

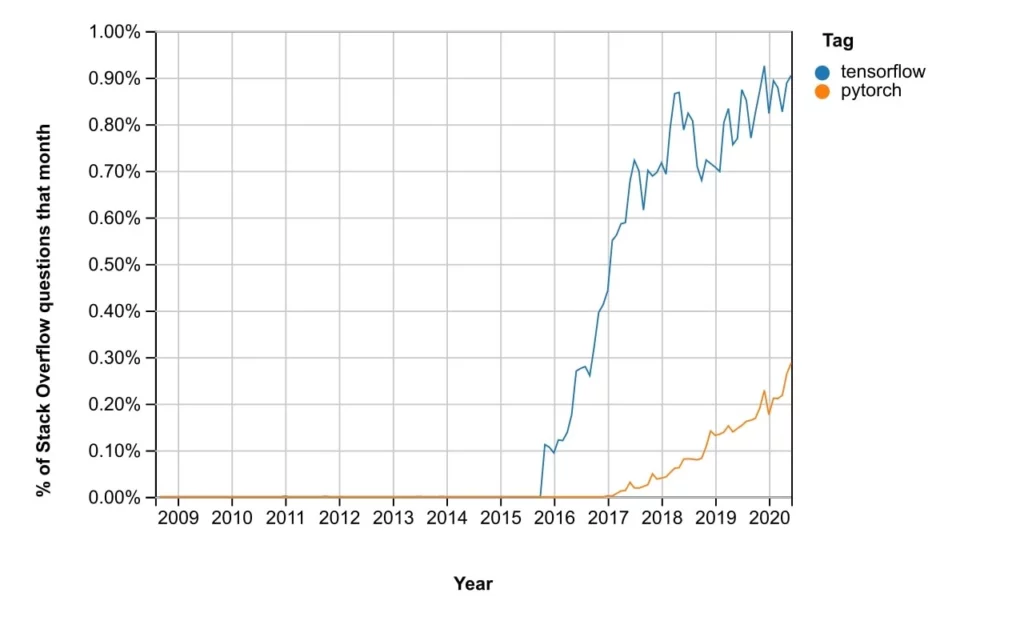

Stack Overflow

Many developer questions get answered on Stack Overflow, so activity there is a useful indicator of how widely a framework is used. The statistics from Stack Overflow are as below:

From the graph above, we can see that over the last few years developers have asked more questions about TensorFlow than about PyTorch. One reason is that PyTorch was released about a year later. After the release of PyTorch in late 2016, the TensorFlow curve starts to flatten a bit while the PyTorch curve keeps rising.

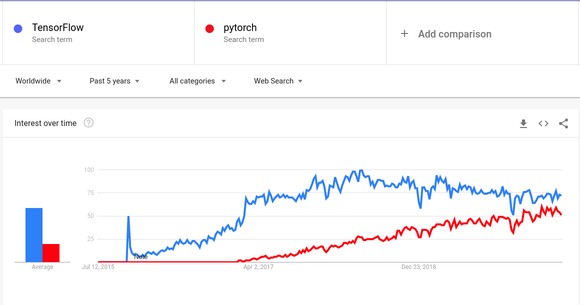

Google Trends

From the above graph, we can see that since its release, PyTorch's popularity has been rising continuously, and if the trend holds it may soon overtake TensorFlow. The graph reflects how often people search Google for content related to each framework.

We cannot say that TensorFlow has an overwhelming win in popularity, because PyTorch is rising quickly; still, at present, TensorFlow is more popular than PyTorch.

Winner: TensorFlow

Documentation and Sources

The official websites of both TensorFlow and PyTorch have very clear documentation and more than enough tutorials for most issues we might run into. Since PyTorch is a bit more recent than TensorFlow, there is less unofficial material for it than for TensorFlow. Even so, the tutorials and pre-trained models available overall are more than enough.

We could say that if you rely solely on online support, TensorFlow might have a little more variety in the material provided.

Winner: Tie

Learning Curve

PyTorch is simple overall, and its only real prerequisite is familiarity with NumPy. Its learning curve is therefore gentle, as there are few new concepts to grasp before starting development.

TensorFlow, on the other hand, requires some new concepts such as variable scoping, placeholders, and sessions. These concepts add some boilerplate code to TensorFlow projects, though not an extensive amount.

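The same computation, doubling a vector, looks like this in TensorFlow:

    import tensorflow as tf  # TensorFlow 1.x API; in 2.x these calls live under tf.compat.v1

    # A placeholder is a graph input that receives its data at run time
    x = tf.placeholder("float", None)
    y = x * 2

    # Nothing is computed until the graph is run inside a session
    with tf.Session() as session:
        result = session.run(y, feed_dict={x: [1, 2, 3]})

And in PyTorch:

    import torch

    # Tensors hold their values immediately, so the result is available at once
    x = torch.tensor([1.0, 2.0, 3.0])
    result = x * 2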

We might say that PyTorch has the upper hand here due to its gentle learning curve.

Winner: PyTorch

Graph Construction

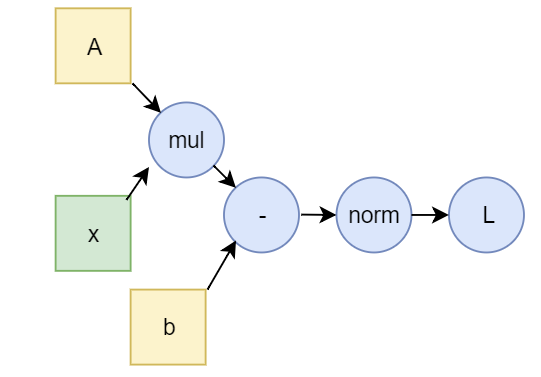

Both PyTorch and TensorFlow view models as a Directed Acyclic Graph (DAG). These are called computational graphs. The difference lies in when these graphs are created.

TensorFlow creates this graph statically, before the model runs, while PyTorch creates it dynamically. In other words, TensorFlow constructs the whole graph first and executes it afterwards. The model communicates with the external environment through the session object and the placeholders. Placeholders are tensors that feed outside data into the model, while the session holds all intermediate values of the calculations performed as the graph instructs.

PyTorch is much simpler in the sense that the graph is constructed dynamically on each forward pass. You do not have to bother with placeholders or sessions, which gives it a more native Python feel.

In TensorFlow, the static graph makes it difficult to feed inputs of varying length on each iteration, as in natural language processing with RNNs. In PyTorch, on the other hand, constructing the graph on every iteration carries some overhead. Overall, the dynamic nature of graph construction gives PyTorch a slight edge over TensorFlow here.
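The contrast between the two styles can be illustrated without either framework. The sketch below is a toy, pure-Python model of the idea and not how TensorFlow or PyTorch are actually implemented; the names `Placeholder`, `Mul`, `run`, and `eager_double` are hypothetical and exist only for this illustration.

```python
# Toy illustration of static (define-then-run) vs dynamic (define-by-run)
# graphs. All class and function names here are hypothetical.


class Placeholder:
    """A named slot in a static graph; it holds no data until run time."""

    def __init__(self, name):
        self.name = name

    def evaluate(self, feed):
        return feed[self.name]


class Mul:
    """A graph node that multiplies each element of its input by a constant."""

    def __init__(self, node, factor):
        self.node = node
        self.factor = factor

    def evaluate(self, feed):
        return [v * self.factor for v in self.node.evaluate(feed)]


def run(node, feed):
    """Plays the role of a session: executes a prebuilt graph on fed data."""
    return node.evaluate(feed)


# Static style: build the graph once, then feed data into it.
x = Placeholder("x")
y = Mul(x, 2)  # y is a graph node, not a value
static_result = run(y, {"x": [1, 2, 3]})


# Dynamic style: the "graph" is simply the Python code path taken on each
# call, so ordinary loops and branches decide what gets computed.
def eager_double(values):
    out = []
    for v in values:  # loop length follows the input length
        out.append(v * 2)
    return out


dynamic_result = eager_double([1, 2, 3])
short_result = eager_double([5])

print(static_result, dynamic_result, short_result)
```

In the static half, `y` cannot be inspected as a value until `run` is called with data, which mirrors the role of placeholders and sessions described above. In the dynamic half, every intermediate value exists as soon as its line executes.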

Winner: PyTorch

Debugging

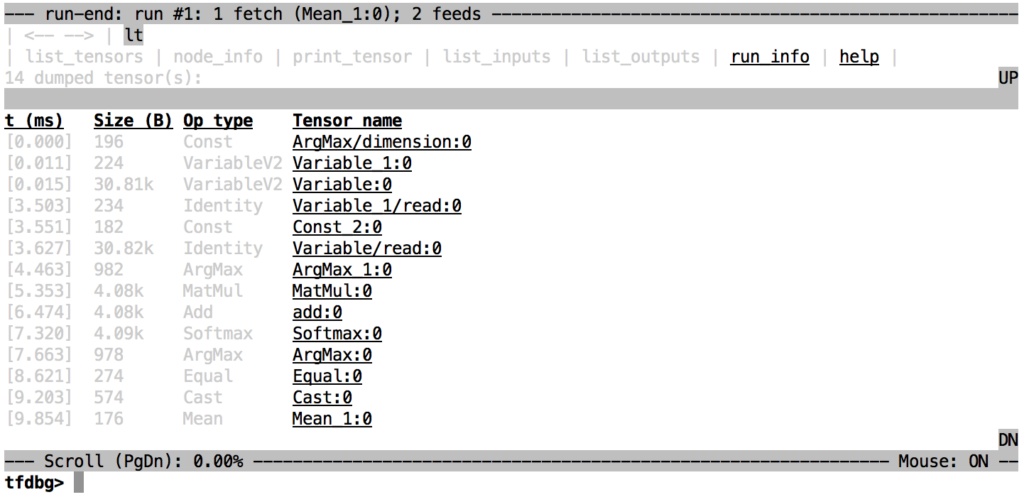

In PyTorch, we can use any standard Python debugger, such as pdb or ipdb, and get the values we want to monitor. Due to the static nature of the computational graph in TensorFlow, we have to learn a separate debugger, tfdbg, which monitors only the model variables and placeholders.

PyTorch is better in the sense that it does not require us to learn an extra debugger.
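As a minimal sketch of what this looks like in practice, the snippet below inspects an intermediate tensor with plain Python tools. The function `forward` and the values of `x` and `weight` are made up for illustration; the pdb line is left commented out so the script runs non-interactively.

```python
import torch


def forward(x, weight):
    """A two-step computation whose intermediate value we want to inspect."""
    hidden = x @ weight  # a real tensor, available immediately
    # Uncomment the next line to pause here in the standard debugger:
    # import pdb; pdb.set_trace()
    assert not torch.isnan(hidden).any(), "hidden contains NaN"
    return torch.relu(hidden)


x = torch.tensor([[1.0, -2.0]])
weight = torch.tensor([[0.5, 1.0], [1.0, 0.25]])
out = forward(x, weight)
print(out)
```

Because `hidden` is an ordinary Python object holding concrete values, it can be printed, asserted on, or examined at a breakpoint like any other variable.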

Winner: PyTorch

Visualization

TensorBoard is a visualization tool that ships with TensorFlow. It is a brilliant tool that lets us view our models directly in the browser. If you have two consecutive runs of a model with different hyperparameters, TensorBoard can show both side by side so the differences are easy to spot. To compare models in PyTorch, you have to use Matplotlib or Seaborn, as PyTorch does not ship its own visualization tool.

Winner: TensorFlow

Serialization

Saving and loading models is pretty easy with both frameworks. In PyTorch, we can save just the weights of the model, or the whole model if we want, and load it later to make predictions. In TensorFlow, on the other hand, the static graph itself can be saved and then loaded into environments that do not use Python at all, such as C++ or Java. This is particularly useful when Python is not an option.
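A minimal sketch of the weights-only route in PyTorch is shown below. The `nn.Linear` model is a stand-in for a real trained network, and the file name `linear_weights.pt` is an arbitrary choice for this example.

```python
import os
import tempfile

import torch
import torch.nn as nn

# A tiny stand-in model; any nn.Module is handled the same way.
model = nn.Linear(3, 1)

path = os.path.join(tempfile.mkdtemp(), "linear_weights.pt")

# Save only the learned parameters (the state dict), not the Python class.
torch.save(model.state_dict(), path)

# To restore, rebuild the same architecture and load the weights into it.
restored = nn.Linear(3, 1)
restored.load_state_dict(torch.load(path))
restored.eval()  # switch to inference mode before making predictions

sample = torch.tensor([[1.0, 2.0, 3.0]])
with torch.no_grad():
    same_output = torch.equal(model(sample), restored(sample))
print(same_output)
```

Note that the weights file alone is not enough to rebuild the model: the Python code defining the architecture must also be available, which is the practical difference from saving a self-contained graph.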

Winner: TensorFlow

Deployment

TensorFlow has a deployment framework called TensorFlow Serving, which deploys models on a specialized gRPC server. PyTorch models, on the other hand, have to be wrapped in an API using Flask or Django so that they can be served.
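Wrapping a PyTorch model in Flask might look roughly like the sketch below. The `/predict` route, the `inputs` and `outputs` JSON keys, and the untrained `nn.Linear` stand-in model are all assumptions made for this example; a real service would load trained weights and validate its input more carefully.

```python
import torch
import torch.nn as nn
from flask import Flask, jsonify, request

app = Flask(__name__)

# Stand-in model; a real service would load trained weights here.
model = nn.Linear(3, 1)
model.eval()


@app.route("/predict", methods=["POST"])
def predict():
    """Accepts JSON like {"inputs": [[1.0, 2.0, 3.0]]} and returns predictions."""
    payload = request.get_json(silent=True) or {}
    inputs = payload.get("inputs")
    if inputs is None:
        return jsonify({"error": "missing 'inputs'"}), 400
    with torch.no_grad():
        outputs = model(torch.tensor(inputs, dtype=torch.float32))
    return jsonify({"outputs": outputs.tolist()})


# Exercise the endpoint with Flask's built-in test client (no server needed).
client = app.test_client()
response = client.post("/predict", json={"inputs": [[1.0, 2.0, 3.0]]})
print(response.status_code, response.get_json())
```

The test client is used here only to show the request and response shape; in a deployment the `app` object would be handed to a production WSGI server instead.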

Winner: TensorFlow

Conclusion

| | PyTorch | TensorFlow |
|---|---|---|
| Release Date | October 2016 | November 2015 |
| Primary Developers | Facebook | Google |
| Operating Systems | Linux, Windows, macOS | Linux, Windows, macOS |
| Popularity | Rising quickly | Comparatively higher |
| Community | Comparatively small | Large |
| Learning Curve | Gentle | Steep |
| Computational Graph | Dynamic | Static |
| Debugging | pdb, ipdb | tfdbg |
| Visualization | Matplotlib, Seaborn | TensorBoard |
| Serialization | Save model weights or whole model | Save computational graph |
| Deployment | Flask, Django | TensorFlow Serving |

Overall, there is no single best framework to start with. Both have pros and cons. As the summary above shows, PyTorch is rising quickly and is more user friendly, while TensorFlow gives you more control over your model. TensorFlow also has excellent tools like TensorFlow Serving and TensorBoard, which have no counterpart shipped with PyTorch.

Which framework to use is a personal choice, but I would recommend PyTorch for the simple reason that it has a gentle learning curve. If a task seems to be out of scope for PyTorch, you might move to TensorFlow, but until then PyTorch is sufficient.